GPT-4: what is OpenAI's most advanced AI, how does it work and what's new?

The world has been exploring the possibilities of artificial intelligence (AI) since the 1950s, when mathematician Alan Turing laid the foundations for the discipline with his paper 'Computing Machinery and Intelligence'.

However, in seven decades of development, the feeling that AI is advancing by leaps and bounds has never been as widespread as it is now. One of the companies responsible is OpenAI, which at the end of last year launched ChatGPT, powered by GPT-3.5.

For the first time in history, we had access to a chatbot capable of holding conversations in natural language, understanding context and generating plausible text on almost any topic. And, a factor we can't overlook, it did not require a subscription.

We were witnessing the massive deployment of a disruptive tool at the heart of which was GPT-3.5, one of the world's most advanced AI language models. What we didn't know was that its creators were already working on a successor called GPT-4.

What is GPT-4?

GPT-4 is the latest pre-trained language model from OpenAI, an artificial intelligence company that has previously released other versions of the model: GPT (2018), GPT-2 (2019), GPT-3 (2020) and GPT-3.5 (2022) (more on the evolution of ChatGPT in this article). OpenAI is also behind another popular tool, the DALL·E image generator.

GPT-4's capabilities stem from its command of language and its multimodal nature. It can perform tasks such as text generation, summarization, translation and complex question answering with remarkable accuracy.

The most notable leap in this new version is its ability to achieve "human-level performance" in some scenarios. Its responses and interactions are more accurate and coherent, two essential qualities for performing well across different fields.

How can I try GPT-4?

You may be wondering if you can already try this new model, and the answer is yes. GPT-4 is now available to everyone. Specifically, there are two ways to experiment with the latest from OpenAI:

The first is to subscribe to ChatGPT Plus, which costs $20 per month and lets us interact with the new model. The basic, free version of ChatGPT remains available, although it should be noted that it runs on GPT-3.5.

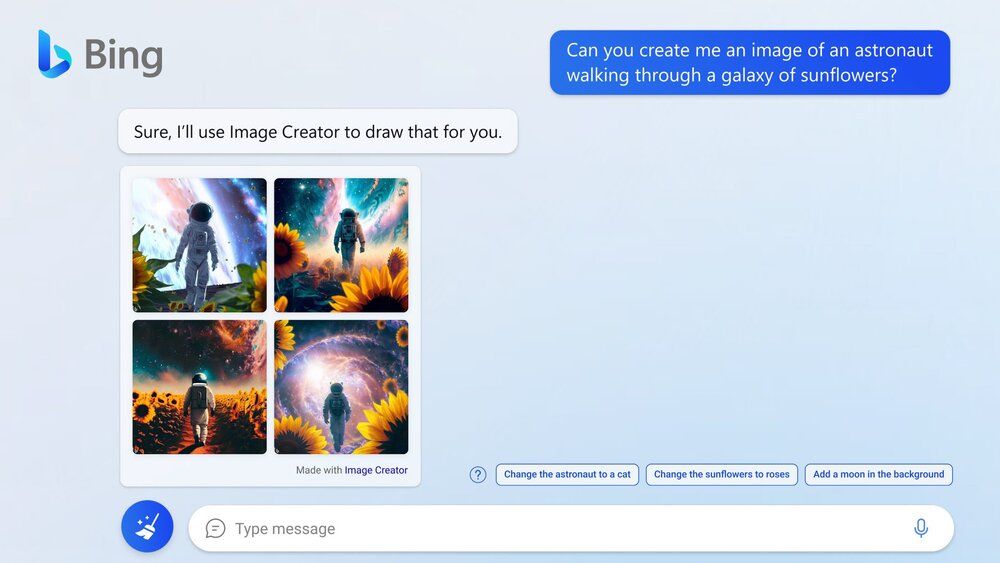

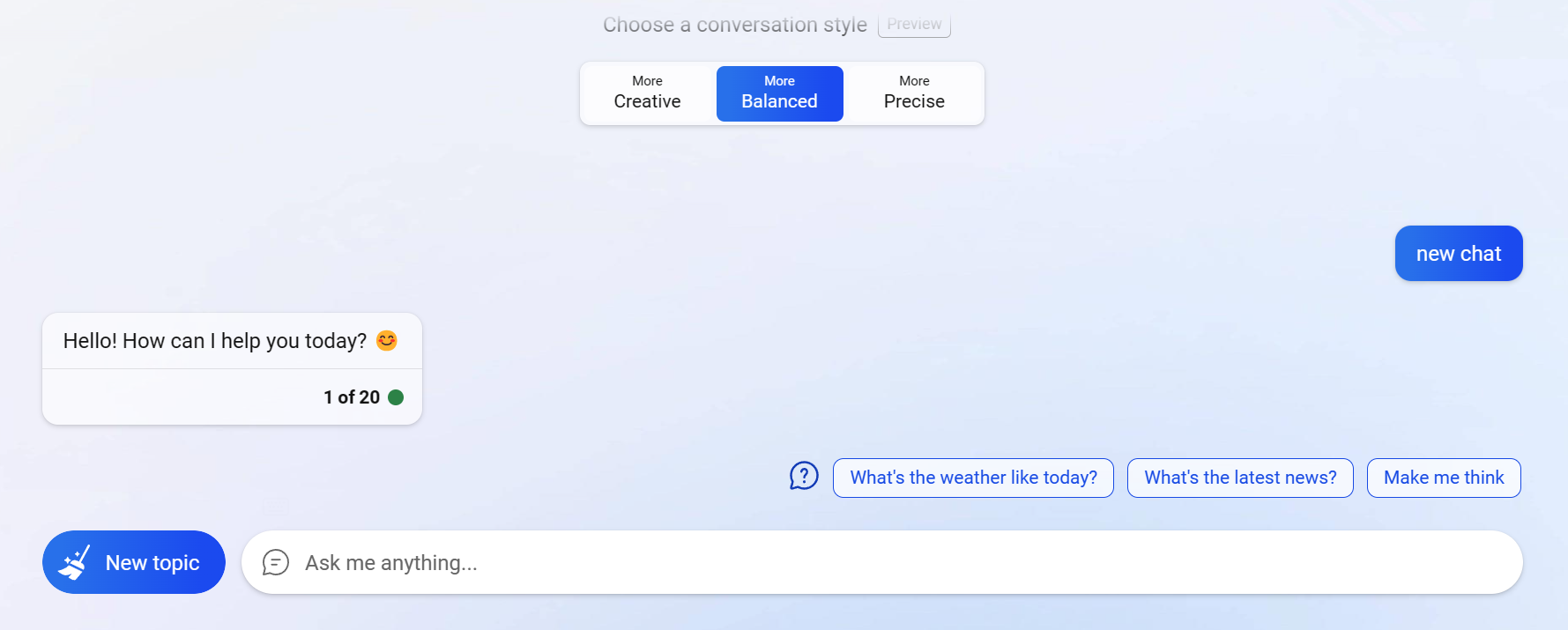

The second is to use Bing with ChatGPT, Microsoft's new search engine that includes a conversational chatbot connected to the Internet. This engine is also based on GPT-4 and can be used for free on mobile and desktop devices, although we will have to request access to the service.

How does GPT-4 work?

Like the previous models, GPT-4 is itself a language model that can be integrated into different systems and applications through its API. GPT-3, for example, has been built into commercial tools such as Jasper AI and Canva Docs.
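To make "through its API" a little more concrete, here is a minimal sketch of how an application might prepare a call to the model. It assumes OpenAI's Chat Completions HTTP endpoint and the `gpt-4` model identifier; the helper name `build_chat_request` and the placeholder key are hypothetical, and the request is only built, never sent.

```python
import json
import os
import urllib.request

# Assumed endpoint for OpenAI's Chat Completions API.
API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(prompt, model="gpt-4", api_key=None):
    """Build (but do not send) an HTTP request asking the model to answer a prompt."""
    # Fall back to an environment variable if no key is passed explicitly.
    key = api_key or os.environ.get("OPENAI_API_KEY", "")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {key}",
        },
        method="POST",
    )


# "sk-placeholder" is a made-up key used only for illustration.
request = build_chat_request(
    "Summarize the Transformer architecture in one sentence.",
    api_key="sk-placeholder",
)
body = json.loads(request.data)
print(request.get_method(), request.full_url)
print(body["model"], body["messages"][0]["role"])
```

Sending the request would require a valid key and something like `urllib.request.urlopen(request)`; products built on the model wrap this kind of call behind their own interface.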

GPT-3.5, the version that popularized advanced conversational chatbots, surprised us through ChatGPT. GPT-4's potential uses go beyond writing assistants, machine translation and chatbots, extending to voice assistants and even search engines.

Internally, GPT-4 has been trained on very large datasets, from which it has learned to generate language similar to that used by humans. Behind this model is a neural network architecture known as the "Transformer".

This architecture, presented by Google in 2017, is built from layers that allow the model to be adapted to work effectively and efficiently on different tasks. OpenAI has built its GPT models by stacking several of these layers.

Through its layers, the Transformer converts each word into a numeric vector so that the model can process the text mathematically, passes those vectors through a neural network and "pays attention" to the surrounding words to interpret each one in context.
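The idea of turning words into vectors and "paying attention" can be sketched in a few lines. This is a toy illustration, not how GPT-4 is implemented: the three-word vocabulary and its 2-dimensional vectors are made up, and real models add learned query, key and value projections, multiple attention heads and many stacked layers, all omitted here.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention using the vectors as queries, keys and values."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this word to every word in the sentence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # New representation: a weighted mix of all the word vectors.
        mixed = [
            sum(w * vec[i] for w, vec in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical vocabulary with made-up 2-dimensional embeddings.
embeddings = {"the": [1.0, 0.0], "cat": [0.0, 1.0], "sat": [1.0, 1.0]}
sentence = ["the", "cat", "sat"]
vectors = [embeddings[word] for word in sentence]

outputs, weights = self_attention(vectors)
for word, row in zip(sentence, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word "attends" to every word in the sentence; the weights in a row always sum to 1.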

Image from TechTalks: Machine learning: What is the transformer architecture?

But it's not just about layers. GPT models also involve a very large number of parameters. These are adjusted during training and, in theory, are directly related to the performance and accuracy of the model.
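To give a sense of what a "parameter" is, the sketch below counts the adjustable numbers in a tiny fully connected network. The layer sizes are hypothetical and chosen only for illustration; a Transformer has other kinds of layers as well, but the principle of counting weights and biases is the same.

```python
def dense_layer_params(n_inputs, n_outputs):
    """One weight per input-output pair, plus one bias per output."""
    return n_inputs * n_outputs + n_outputs


def network_params(layer_sizes):
    """Sum the parameters of consecutive fully connected layers."""
    return sum(
        dense_layer_params(a, b)
        for a, b in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical toy network: 4 inputs, 8 hidden units, 2 outputs.
toy_sizes = [4, 8, 2]
total = network_params(toy_sizes)
print(total)  # (4*8 + 8) + (8*2 + 2) = 58
```

Training consists of nudging each of these numbers until the model's outputs improve, which is why larger counts are, in theory, associated with more capable models.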

How is it different from GPT-3?

According to OpenAI documentation, GPT-3 has 96 layers and 175 billion parameters. The main difference between OpenAI's latest model and its predecessor, Wired reports, could be in the parameters. GPT-4 may have been trained with 100 trillion parameters, almost 600 times more than GPT-3.
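As a quick sanity check on the "almost 600 times" comparison, the sketch below divides the rumored figure by GPT-3's parameter count. It assumes the rumor refers to 100 trillion parameters, the only reading consistent with that ratio; OpenAI has not confirmed any number.

```python
GPT3_PARAMS = 175_000_000_000  # 175 billion, as published for GPT-3
RUMORED_GPT4_PARAMS = 100_000_000_000_000  # 100 trillion, unconfirmed rumor

ratio = RUMORED_GPT4_PARAMS / GPT3_PARAMS
print(round(ratio))  # 571
```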

It should be noted that since the company led by Sam Altman began to move away from the non-profit model on which it was founded, it has chosen not to disclose certain technical details. Accordingly, the GPT-4 paper does not specify details such as the number of parameters used in the model.

Another major difference is its "multimodal" nature. Instead of working only with text, GPT-4 will also be able to accept images as input, although this is not currently available. As promised, we will be able to upload images to provide visual cues. However, the results will always be presented as text.

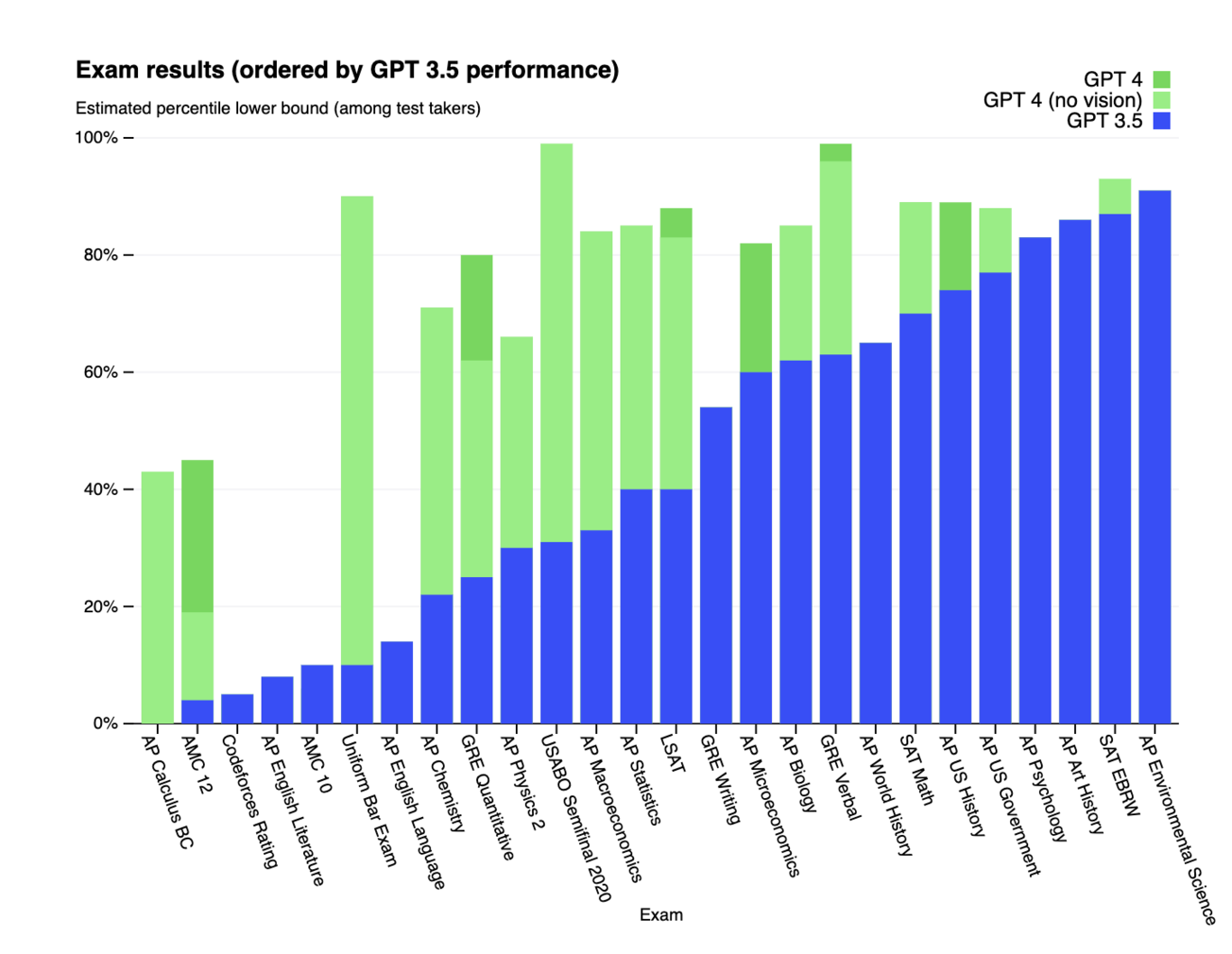

In addition, the model takes a huge leap in performance. To measure its capabilities, the company had it take exams designed for humans, without making specific adjustments to help it pass them. As OpenAI points out in a paper, GPT-4 passed the tests with flying colors, achieving better results than GPT-3.5.

Does Bing really work with GPT-4?

In early February, Microsoft revamped Bing with a new search engine and chat feature, using a combination of in-house and OpenAI technologies. The question after the launch event was whether we were finally seeing GPT-4 in action. That question has now been answered: Bing does indeed run on GPT-4.