The origin and evolution of Chat GPT: The natural language model that is changing the game

Chat GPT is a natural language model developed by OpenAI, an artificial intelligence research organization based in San Francisco, California. It was released in November 2022 and builds on OpenAI's GPT series of language models, which began with the original "GPT-1" model in 2018 and has been updated and improved several times since then.

In this article, we will explore in detail the origin of Chat GPT, its background and how it has evolved to become one of the most advanced and popular language models today.

What is OpenAI, the organization behind Chat GPT?

OpenAI is an artificial intelligence research organization founded in 2015 by a group of well-known entrepreneurs, researchers and technology experts. It was created as a non-profit organization, and in 2019 it added a "capped-profit" subsidiary that remains under the control of the non-profit's board, so it does not have an owner in the traditional sense of the word. It is run by a leadership team that includes its CEO, Sam Altman, and its president and co-founder, Greg Brockman, who previously served as CTO. In addition, OpenAI has a Board of Directors that oversees its direction and strategy.

While OpenAI does not have a principal owner or shareholder, it has a variety of investors and backers, both private sector and government. These investors include Elon Musk, Reid Hoffman, Khosla Ventures, Peter Thiel, Microsoft, Amazon Web Services, the U.S. Department of Energy, and the U.S. Office of Naval Research, among others.

Today, OpenAI is a world-renowned artificial intelligence research organization that has made significant advances in the field of AI. The organization has developed several highly advanced natural language models, such as the famous GPT-3, which is capable of generating coherent and compelling text in a variety of languages.

OpenAI has also created artificial intelligence systems capable of playing video games competitively, and has even developed robots that can learn to perform physical tasks through observation and practice.

The origin of OpenAI

The origin of OpenAI is a story that spans several years and has involved a variety of influential people in the technology world. Below is a summary chronology of the most important events related to the founding of OpenAI:

- December 2013: Elon Musk, Sam Altman and other entrepreneurs meet to discuss the potential of artificial intelligence and concerns about its impact on society.

- January 2015: Musk makes a $10 million donation to the Future of Life Institute to fund research aimed at keeping artificial intelligence safe and beneficial.

- July 2015: Altman, Musk and other entrepreneurs meet to discuss the creation of a nonprofit organization to advance the responsible development of artificial intelligence.

- December 2015: OpenAI is founded with funding pledges totaling $1 billion from Musk and other backers. The organization's founders include Musk, Altman, Greg Brockman, Ilya Sutskever, John Schulman and Wojciech Zaremba.

The origin of Chat GPT

Background: Pre-trained Language Models.

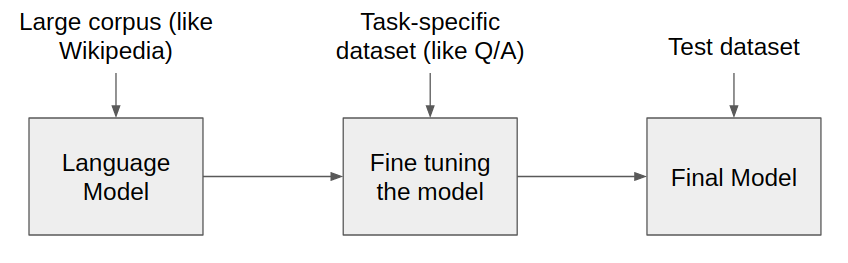

Pre-trained language models are a type of artificial intelligence model that is trained on large amounts of text to understand natural language. These models can be used for tasks such as text classification, text generation, and language translation, among others.

A key foundation for today's pre-trained language models was the Transformer, a neural network architecture introduced by Google researchers in 2017. The Transformer is an architecture rather than a pre-trained model in itself: it uses an attention mechanism to relate each word to the other words around it, which makes it well suited to understanding natural language in context.

The success of the Transformer inspired the development of pre-trained language models built on it, such as GPT-1, which was released by OpenAI in 2018.

GPT-1: The precursor model to Chat GPT.

GPT-1, short for "Generative Pre-trained Transformer," was OpenAI's first pre-trained language model. This model had about 117 million parameters and was trained on BooksCorpus, a collection of several thousand unpublished books. The goal was for the model to learn general patterns of natural language from this text and then be fine-tuned for specific tasks.

GPT-1 was an important milestone in the development of pre-trained language models, as it demonstrated that a single pre-trained model could be adapted to a variety of language tasks. However, the model had some limitations, including difficulty staying coherent over longer passages and conversations.

Chat GPT: The Improved Model

To improve on GPT-1, OpenAI released a larger successor in 2019 called "GPT-2." This model had up to 1.5 billion parameters, was trained on a 40 GB text corpus drawn from over 8 million web pages, and was able to generate more fluent and consistent text than GPT-1.

GPT-2 was followed in 2020 by GPT-3, a much larger model with 175 billion parameters, and later by the GPT-3.5 series. Chat GPT, released in November 2022, is not a smaller version of GPT-2. It is a model from the GPT-3.5 series that OpenAI fine-tuned specifically for dialogue.

To do this, OpenAI first trained the model on example conversations written by human trainers, and then applied reinforcement learning from human feedback (RLHF), in which human rankings of candidate responses are used to steer the model toward more helpful answers. The model was designed specifically for conversation and to provide a more natural and effective chat experience.

The Chat GPT model uses a Transformer neural network architecture that is pre-trained on large amounts of text to model natural language in context and generate useful responses. The model can be adapted to different situations and contexts through the instructions it is given or through further fine-tuning. It does not learn continuously from individual conversations; improvements come from new rounds of training.

How Chat GPT works

Chat GPT uses a text generation technique known as "autoregression," which involves the model generating text sequentially, one token (a word or piece of a word) at a time, with each new token chosen based on all the text that came before it. The model uses a Transformer neural network to capture the context and semantics of the conversation and generate a coherent and useful response.
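
To make the idea of autoregression concrete, here is a minimal sketch in Python. It is not how Chat GPT is implemented: the probability table `NEXT_TOKEN_PROBS` and the function `generate` are invented for illustration, and this toy model only looks at the last token, whereas a real Transformer conditions on the whole sequence.

```python
# Toy autoregressive generation: text is built one token at a time.
# The probabilities below are made up purely for illustration.
NEXT_TOKEN_PROBS = {
    "<start>": {"hello": 0.9, "how": 0.1},
    "hello": {"how": 0.7, "<end>": 0.3},
    "how": {"are": 1.0},
    "are": {"you": 1.0},
    "you": {"<end>": 1.0},
}


def generate(max_tokens=10):
    """Generate tokens greedily until <end> or max_tokens is reached."""
    tokens = ["<start>"]
    for _ in range(max_tokens):
        # Look up the candidate next tokens given the text so far
        # (here simplified to the last token only).
        candidates = NEXT_TOKEN_PROBS[tokens[-1]]
        # Greedy decoding: pick the most probable next token.
        next_token = max(candidates, key=candidates.get)
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens[1:]  # drop the <start> marker


print(" ".join(generate()))  # prints "hello how are you"
```

Real systems usually sample from the probabilities instead of always taking the most likely token, which is one reason the same prompt can produce different answers.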

The model also uses an attention mechanism, the core component of the Transformer, which lets the model weigh how relevant each part of the input text is when producing each new token, so it can focus on the most relevant parts of the context and generate more accurate responses.
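
The attention idea can be sketched with scaled dot-product attention, the form described in the original Transformer work. The code below is a simplified, single-query illustration in plain Python, with hypothetical function names (`softmax`, `attention`) and tiny hand-made vectors; real models use many attention heads over learned, high-dimensional vectors.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    # Similarity between the query and every key, scaled by sqrt(d).
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    weights = softmax(scores)
    # The output is a weighted average of the value vectors.
    output = [
        sum(w * value[i] for w, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return weights, output


# Two input positions; the query is most similar to the first key.
weights, output = attention(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(weights, output)
```

In this example the first position receives the larger weight, so the output leans toward the first value vector. That is what "focusing" on part of the input means in practice.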

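Chat GPT can also be adapted through a process called "transfer learning," or fine-tuning, in which the already pre-trained model receives additional training on new tasks and data to improve its performance and accuracy. This happens in separate training runs, not automatically while the model is chatting with users.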
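
The benefit of starting from a pre-trained model can be shown with a deliberately tiny stand-in. The sketch below fits a one-parameter model `y = w * x` by gradient descent; the datasets, the `train` function and all numbers are hypothetical and have nothing to do with Chat GPT's real training data or procedure. It only illustrates why fine-tuning from pre-trained weights needs less task-specific training than starting from scratch.

```python
def train(w, data, lr=0.1, steps=20):
    """Fit y = w * x by gradient descent on mean squared error."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


# "Pre-training": plenty of steps on general data (here y = 2x).
general_data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
pretrained_w = train(0.0, general_data, steps=50)

# "Fine-tuning": a few steps on a small, related task (here y = 2.2x).
task_data = [(1.0, 2.2), (2.0, 4.4)]
fine_tuned_w = train(pretrained_w, task_data, steps=5)

# Baseline: the same few steps on the task, starting from zero.
from_scratch_w = train(0.0, task_data, steps=5)

print("fine-tuned error:  ", abs(fine_tuned_w - 2.2))
print("from-scratch error:", abs(from_scratch_w - 2.2))
```

With the same five task-specific steps, the fine-tuned weight ends up closer to the target than the one trained from scratch, because it started from knowledge gained during pre-training.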

Uses of Chat GPT

Chat GPT is used in a variety of applications, from customer service chatbots to virtual personal assistants. The model is particularly useful for automating tasks that require natural language understanding and generation, such as language translation, text summarization, and drafting or rewriting text.

The model is also used in research and development of new artificial intelligence technologies, and the Transformer architecture behind it has been applied in other areas such as speech recognition and computer vision.

Limitations and challenges

Although Chat GPT is an advanced and effective language model, it still has some limitations and challenges. One of the biggest is that the model can lose track of context in long conversations and can produce answers that sound plausible but are incorrect or inconsistent.

The model may also be susceptible to biases and prejudices in the training text, which may affect its ability to generate accurate and useful responses in certain situations.

Another challenge is data security and privacy. Conversations with the model may be collected and stored, and they can contain users' personal and sensitive information, which raises concerns about how that data is protected and used.

Future improvements and expectations for Chat GPT in 2023

Despite these challenges, Chat GPT is expected to continue to evolve and improve in the near future. Some expected improvements include:

- Personalization: Chat GPT can be customized to suit different situations and contexts, but is expected to become even more customizable in the future to meet the specific needs of users and businesses.

- Sentiment detection: Chat GPT is expected to be able to detect and respond to user sentiment, which would enable a more effective and personalized chat experience.

- Integration with other technologies: Chat GPT is expected to integrate with other technologies, such as computer vision and speech recognition, to provide a more advanced and effective chat experience.

- Security and privacy: more advanced security and privacy measures are expected to be implemented to protect users' personal and confidential data.

Chat GPT is an advanced and effective natural language model that has been developed through years of research and development in artificial intelligence. The model has the ability to understand and generate natural language in context and is used in a variety of applications, from customer service chatbots to virtual personal assistants.

Although the model has some limitations and challenges, it is expected to continue to evolve and improve in the near future, with improvements in personalization, sentiment detection, integration with other technologies, and security and privacy.